The Enginuity Digest

Welcome back. Stepping away from the hype around model releases, this week we look lower down the AI development stack and focus on semiconductors. The next phase of AI is not just about who has the smartest model, but who can secure the memory, packaging, lithography, cooling and interconnects required to actually run these systems at scale. In other words, the excitement is still in software, but the real innovation is firmly based in the components quietly building this new world.

Here's what will be covered in today's newsletter:

News Update:

NVIDIA GTC: Insane Chip Revenue, new releases and more

Organic AI: A functional AI built by training 200,000 biological brain neurons to play DOOM

Quantum Battery: the world's first proof of concept, developed in Australia

Deep Dive: Semiconductors for AI — what they are, why there are different types, and why the world is fighting over them

In Other News: Power, infrastructure, resources and the broader AI buildout

What’s been happening with AI?

The NVIDIA GTC: The Biggest Annual Event in AI (Link)

GTC 2026 has officially kicked off, bringing together major influencers, VCs and startups to assess the newest AI innovations.

The company unveiled its Vera Rubin platform as a full-stack AI system made up of seven new chips, five rack-scale systems and one integrated AI supercomputer.

In a staggering display of growth, NVIDIA has now achieved 40 million times more compute than was available 10 years ago.

Initial reports claimed 35x higher inference throughput per megawatt, but internal benchmarks have pushed that figure to 50x.

Why this matters: NVIDIA is effectively arguing that the winners in AI will be decided by systems engineering. The glamour is still in the models, but the money is increasingly in the infrastructure that makes those models useful in the real world.
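
For a sense of scale, the 40-million-fold figure quoted above can be turned into an implied yearly growth rate. The sketch below is a back-of-the-envelope calculation only: it assumes smooth compounding over exactly 10 years, which real hardware generations do not follow, and the function names are purely illustrative.

```python
import math


def implied_annual_factor(total_factor: float, years: float) -> float:
    """Yearly multiplier that compounds to total_factor over the given years."""
    return total_factor ** (1.0 / years)


def doubling_time_months(annual_factor: float) -> float:
    """Months needed to double at a constant yearly multiplier."""
    return 12.0 * math.log(2.0) / math.log(annual_factor)


# Figures from the text: 40 million times more compute over 10 years.
# Assumption: growth is treated as perfectly smooth compounding.
annual = implied_annual_factor(40e6, 10)
months = doubling_time_months(annual)

print(f"Implied growth: about {annual:.1f}x per year")
print(f"Implied doubling time: about {months:.1f} months")
```

Under those assumptions, compute would be growing nearly sixfold each year, or doubling roughly every five months.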

Organic AI: 200,000 neurons master DOOM (Link)

Researchers at Melbourne-based Cortical Labs have successfully trained a "biological computer" to play the 1993 classic, DOOM.

The setup uses induced neurons on a silicon interface, with the game state translated into electrical stimulation patterns and the neural responses decoded back into in-game movement and actions.

This is not some sentient brain in a jar, and it is nowhere near replacing conventional compute. But it is a meaningful demonstration of adaptive learning in a biological-silicon system.

The more interesting angle is energy and architecture. Biological neural systems learn very differently from GPU-heavy AI systems, requiring far less energy. If these platforms continue to grow, they could open an entirely different branch of computing that complements silicon rather than competing directly with it.

Why this matters: This marks a shift toward neuromorphic and biological computing, which could eventually offer the "tacit knowledge" of a human brain at a fraction of the power cost of a GPU. It is early, strange and a little bit unsettling, but biological computing is starting to move from science-fiction headline to engineering platform.

World’s First Quantum Battery Prototype Built at RMIT (Link)

Researchers from CSIRO, RMIT and the University of Melbourne have demonstrated what they describe as the world’s first proof-of-concept quantum battery that can charge, store and discharge energy.

The basic idea is very different from a conventional battery. Instead of storing energy through bulk chemical reactions, a quantum battery uses quantum interactions between light and matter.

In this prototype, researchers used an organic microcavity structure and laser excitation to demonstrate so-called collective charging effects, where the battery can charge faster as more storage units act together. That is the counterintuitive part: in theory, adding more cells can make charging faster rather than slower.

That said, this is nowhere near ready to power your phone or EV. The prototype stores only a tiny amount of energy and currently retains it for nanoseconds. What has changed is that the team has now shown a functional proof-of-concept at room temperature with a full charge-store-discharge cycle, which is why this is being treated as a genuine engineering milestone rather than just a theoretical paper.

Why this matters: This is not the next lithium-ion battery. It is an early demonstration that a once-theoretical energy concept can now be built, tested and improved, which is exactly how major engineering breakthroughs usually begin.

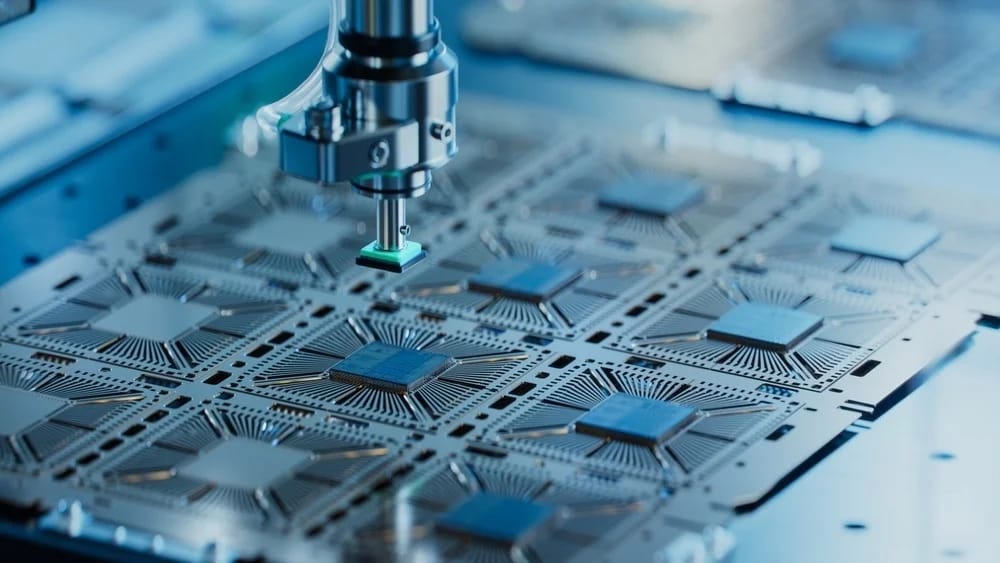

Deep Dive: The Semiconductor Frontier

Semiconductor Growth

Semiconductors are no longer just a technology sector. They are now the physical infrastructure layer beneath AI, electrification, defence systems, telecoms, advanced manufacturing and modern industrial control. If oil defined the twentieth century, silicon is making a decent case for defining the twenty-first.

The market numbers alone are staggering. Global semiconductor sales reached about $792 billion in 2025, and McKinsey now estimates the semiconductor market could reach $1.6 trillion by 2030, with much of that growth tied directly to leading-edge AI chips and high-bandwidth memory.
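
To put those two numbers in perspective, here is a rough sketch of the compound annual growth rate they imply. It assumes the $792 billion and $1.6 trillion figures measure the same thing and that growth is spread evenly over the five years from 2025 to 2030; neither is guaranteed, so treat the result as indicative only. The function and variable names are illustrative.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over a number of years."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Figures from the text, in billions of US dollars.
sales_2025 = 792.0
forecast_2030 = 1600.0

# Assumption: even compounding across the five years 2025 to 2030.
rate = cagr(sales_2025, forecast_2030, 2030 - 2025)
print(f"Implied growth: about {rate:.1%} per year")
```

On those assumptions the market would need to grow by roughly 15% every year, which is a steep pace for an industry already worth hundreds of billions of dollars.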

What is a Semiconductor?

A semiconductor is a material that conducts electricity better than an insulator, but not as freely as a metal. That middle ground is what makes it so valuable. It means engineers can control when electricity flows, where it flows, and how strongly. That controllability is what allows semiconductors to act as switches, amplifiers, memory and sensing elements inside almost every modern electronic system.

Silicon became the dominant semiconductor because it is abundant, stable, relatively cheap, and well suited to manufacturing at enormous scale. It is the foundation of the logic chips that run computers, phones, control systems and AI accelerators. But as technology has advanced, it has become clear that no single semiconductor material is ideal for every job.

Types of Semiconductors

The AI story is often simplified down to “GPUs”, but the real stack is much broader.

First, you have logic chips. These are the processors doing the actual computation, whether that is training a model or running inference. NVIDIA’s Rubin GPUs sit in this category, as do TPUs and custom accelerators from the hyperscalers.

Second, and increasingly just as important, you have memory. AI workloads are ravenous for memory bandwidth, which is why HBM has become so strategic. HBM stacks memory vertically and places it very close to the processor, allowing huge data throughput with better power efficiency than conventional memory approaches.

Third, tying these together, you have advanced packaging. This is the part many people still underestimate. Modern AI systems are not just single chips sitting alone on a board. They are highly integrated packages where compute dies, memory stacks and interconnects are brought together with extreme precision.

Finally, outside AI compute, there are power semiconductors such as silicon carbide and gallium nitride, which are crucial for EV drivetrains, inverters, charging systems and grid equipment. These are different from AI accelerators, but they show why semiconductors are really the control layer for the entire electrified economy, not just data centres.

The Geopolitical Situation

This is where the story gets serious. The AI boom has collided with a supply chain that is both highly specialised and geographically concentrated.

Taiwan remains the indispensable centre of advanced logic manufacturing, with TSMC sitting at the centre of the global leading-edge ecosystem. That concentration has pushed governments to treat semiconductors as a national security issue, not just an industrial one. TSMC’s U.S. investment is now expected to reach US$165 billion, including new fabs, advanced packaging facilities and R&D. That is not normal corporate expansion. That is industrial policy.

At the same time, the United States has continued using export controls to restrict China’s access to advanced-node semiconductor manufacturing and AI-capable computing technologies, explicitly linking those controls to military and AI applications. That means semiconductors now sit at the intersection of trade policy, defence strategy and AI competition.

The Takeaway

Semiconductors matter because AI has stopped being purely a software story. Every new model release now pulls on a very physical chain of constraints: foundries, materials, memory, packaging, cooling, transmission lines and geopolitical alignment. The more “intelligent” the world becomes, the more the world will care about who owns the hardware beneath that intelligence.

That is why semiconductors are no longer a niche topic for electronics engineers. They are now core to understanding the future of AI, infrastructure, manufacturing and power systems.

In Other News

Anthropic and the US Department of Defense have had a major falling-out, with Anthropic now named a national security and supply chain risk. The clash came as Anthropic refused to allow its AI to be used for mass surveillance or in lethal weapons targeting and firing decisions. (Link)

NVIDIA has unveiled the first chip specifically targeted at orbital data centres. What was just a fantasy idea a few months ago is rapidly being seen as a potential solution to AI’s energy problems. (Link)

Uber founder Travis Kalanick has launched Atoms, which aims to develop robotics for the food, mining and transportation industries. The company's focus is on specialised machines rather than humanoids. (Link)

A new feature on “AI in Australia’s next mining revolution” argues that the sector is moving beyond basic automation to full‑stack digital operations, with AI‑driven predictive maintenance, optimisation and decision support now seen as core levers for productivity and safety. (Link)

Global AI energy demand is forcing utilities and engineers to rethink the generation mix, with data centres already hitting 26% of total state electricity use in Virginia and 21% in Ireland – and forecasts that Irish data centres alone could hit 32% of national demand by 2026. (Link)

GlobalData expects Australia’s iron‑ore output to rise 2.6% in 2026 to about 993 million tonnes on the back of ramp‑ups at Onslow, Western Range and Iron Bridge, reinforcing a long pipeline of mine, rail and port engineering work even as some legacy operations head for closure. (Link)

This newsletter seeks to engage and challenge the way engineers see AI and its potential for application in industry. Any thoughts, questions or arguments are welcome! Finally, if you enjoy the content, please pass it on to your friends and colleagues. I would love the support.