The Enginuity Digest

Welcome back, everyone! The break ran a little longer than expected while I focused elsewhere, but we are now back into it. 2026 has begun at full speed with rapid advances in AI capability, shifting geopolitical dynamics around compute and semiconductors, and industrial deployments that are quietly moving from pilot to production.

One of the defining trends of late 2025, agentic systems, is now moving into real operational rollout in 2026. These are not just models that answer questions, but coordinated agents that plan, execute, delegate and monitor. Today we’ll break down what this means and why engineers should be paying attention.

Here's what will be covered in today's newsletter:

News Update:

Frontier Models Go Fully Agentic: The latest releases are doubling down on tool use, multi-step reasoning and coordination

AI Enters the Control Room: Real oil & gas refinery deployments are delivering measurable reductions in downtime, emissions and maintenance spend

GPT-5.2 Does Theoretical Physics: GPT-5.2 derives a new theoretical physics result, showing it can generate truly novel ideas.

Deep Dive: The Agentic and Microservices Future

Agents in Application: Tailings Integrity

In Other News:

What’s been happening with AI?

Frontier Models Go Fully Agentic (Link)

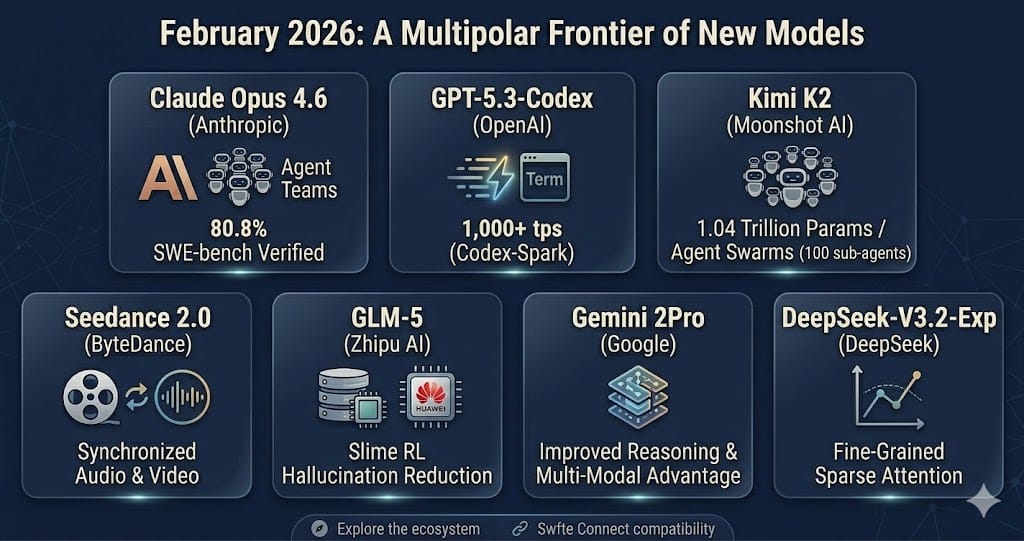

A February roundup highlights seven major model launches and upgrades, including new releases like Gemini 2.5 Pro, GPT‑5.3, Claude Opus 4.6, GLM‑5, Kimi K2 and DeepSeek variants.

Every major release foregrounds agent capabilities: tool use, multi‑step planning, self‑correction and autonomous execution of long, complex tasks (for more on agents, see the deep dive below).

Claude Opus 4.6 introduces “Agent Teams” coordinating 2–16 agents, while Kimi K2.5 demonstrates “agent swarms” with up to 100 sub‑agents orchestrated over 1,500 steps.

The article argues that multi‑model architectures are now table stakes: frontier models for complex reasoning, and cheaper open models like GLM‑5/Kimi K2 handling high‑volume workloads at 40–170× lower cost with comparable quality.

Why it matters: Organisations are rapidly learning that LLMs perform significantly better when specialised to specific contexts. Orchestrating and connecting these specialists is becoming the space where massive value adds are being unlocked.
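As a rough illustration of the multi-model idea above, here is a minimal Python sketch of a router that sends simple, high-volume tasks to a cheap tier and escalates only complex ones. The tier names, the prices and the routing rule are all assumptions made for illustration; none of them come from the article or from any vendor's price list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    cost_per_1k_tokens: float  # illustrative price, not a real one


# Hypothetical tiers with a 40x price gap (the low end of the range quoted above)
FRONTIER = ModelTier("frontier-model", 10.0)
OPEN = ModelTier("open-model", 0.25)


def route(task: dict) -> ModelTier:
    """Send a task to the frontier tier only if it looks complex."""
    is_complex = task.get("steps", 1) > 3 or task.get("needs_tools", False)
    return FRONTIER if is_complex else OPEN


def batch_cost(tasks: list) -> float:
    """Total cost of a batch when every task goes through route()."""
    return sum(route(t).cost_per_1k_tokens * t["tokens"] / 1000 for t in tasks)


# Nine simple lookups plus one multi-step planning task, 1,000 tokens each
tasks = [{"tokens": 1000} for _ in range(9)]
tasks.append({"tokens": 1000, "steps": 8, "needs_tools": True})

routed_cost = batch_cost(tasks)
frontier_only_cost = len(tasks) * FRONTIER.cost_per_1k_tokens
```

With these made-up numbers the routed batch costs 12.25 against 100 for sending everything to the frontier tier. In practice the hard part is the routing rule itself, which here is a deliberately crude stand-in.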

AI Has Taken Over the Refinery Control Rooms (Link)

A review of AI in oil and gas refining reports that machine learning based advanced process control and predictive maintenance can significantly cut emissions, downtime and operating costs.

For example, Shell’s implementation across more than 10,000 assets produces and processes 1.2 trillion data points per year, delivering around 20% reductions in unscheduled downtime and 15% lower maintenance spend.

Broader studies show predictive maintenance delivering up to 30% lower maintenance expenditure and 40% less unplanned downtime in refinery environments.

Why it matters: These are hard, plant‑level performance numbers that OT engineers can benchmark against when arguing for AI‑driven APC and predictive maintenance in brownfield refinery and petrochemical assets.

GPT-5.2 Makes an Original Theoretical Physics Breakthrough (Link)

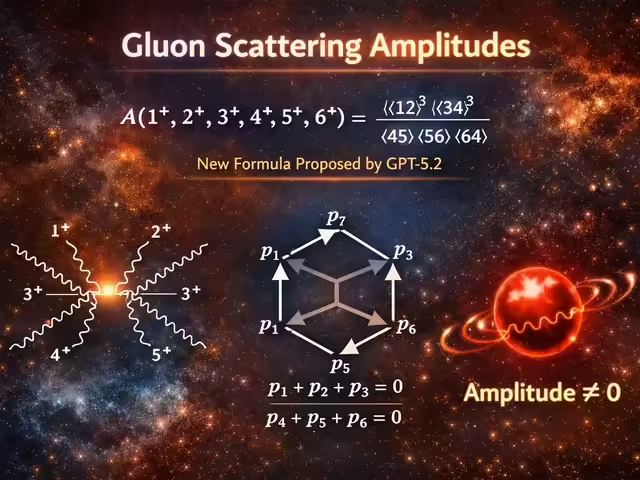

OpenAI announced that its latest iteration, GPT-5.2, has derived a new result in theoretical physics.

The AI tackled a problem in particle physics that was assumed solved, with GPT-5.2 finding the existing answer was wrong and suggesting a correct answer in its place.

A specialized research version of GPT-5.2 autonomously wrote the mathematical proof in 12 hours, and it was then verified by physicists from Harvard, Cambridge, and Princeton.

Separately, the model solved five out of ten research-level math problems that were not publicly available (and which took the professors who wrote them months to solve), demonstrating that LLMs are evolving from linguistic predictors into logical reasoners.

Why it matters: Models are rapidly expanding in capability, moving beyond assistants to working at the forefront of scientific discovery. Many have argued that models are not truly capable of “new ideas”, but the evidence against this view is becoming harder and harder to counter.

The Agentic and Microservices Future

What Exactly Are AI Agents?

At their core, AI agents are sophisticated AI programs designed to perform specific tasks or achieve defined goals with a degree of autonomy. Think of them as specialized digital employees, each equipped with:

A Goal: A clear objective (e.g., "design a sustainable building layout," "optimize a supply chain," "identify potential structural weaknesses").

Perception: The ability to understand their environment, whether through sensor data, digital documents, or user input.

Planning & Reasoning: The capacity to break down a complex goal into actionable steps, learn from experience, and adapt to new information.

Action: The means to execute tasks, such as generating code, running simulations, controlling robots, communicating with other systems, or producing design proposals.

Memory: The ability to retain information and learn from past interactions and outcomes, continuously improving their performance.

Unlike traditional software that follows rigid instructions, agents can reason and adapt. They don't just execute; they strategise across a collection of steps.
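To make the five parts listed above concrete, here is a toy Python sketch of an agent loop. Everything in it is an assumption for illustration: the `Agent` class, the proportional "planning" rule standing in for LLM reasoning, and the one-line environment. Memory here only records history; a real agent would feed it back into planning.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class Agent:
    """Toy agent; all names and rules are hypothetical."""
    goal: float                                   # Goal: target value to reach
    act: Callable[[float, float], float]          # Action: (reading, adjustment) -> new reading
    memory: List[Tuple[float, float]] = field(default_factory=list)  # Memory

    def perceive(self, reading: float) -> float:
        # Perception: reduce the environment to an error against the goal
        return self.goal - reading

    def plan(self, error: float) -> float:
        # Planning: a fixed proportional step stands in for real reasoning
        return 0.5 * error

    def run(self, reading: float, max_steps: int = 20, tolerance: float = 0.01) -> float:
        for _ in range(max_steps):
            error = self.perceive(reading)
            if abs(error) < tolerance:
                break  # goal reached, stop acting
            adjustment = self.plan(error)
            self.memory.append((reading, adjustment))
            reading = self.act(reading, adjustment)
        return reading


# Environment: applying an adjustment simply shifts the reading
agent = Agent(goal=10.0, act=lambda reading, adjustment: reading + adjustment)
final_reading = agent.run(reading=0.0)
```

The loop is the important part: perceive, plan, act, remember, repeat until the goal is met or the step budget runs out.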

The Power Surge: Why Now?

The rise of agentic AI isn't a sudden leap but rather a convergence of several critical advancements:

Large Language Models (LLMs): The foundational reasoning capabilities of models like GPT-4, Claude, and Gemini provide agents with incredible understanding, planning, and communication skills. LLMs act as the "brain" that allows agents to interpret complex instructions and generate coherent action plans.

Increased Computational Power: The sheer scale of modern AI infrastructure (like the NVIDIA and Meta "Rubin" initiative we discussed) provides the necessary horsepower for agents to run sophisticated simulations, process vast datasets, and learn continuously.

Tool Integration: Agents are no longer confined to just talking. They can seamlessly integrate with and utilize external tools—CAD software, simulation engines, enterprise resource planning (ERP) systems, robotics platforms, and even web browsers. This "tool-use" capability is what transforms them from thinkers into doers.

Specialized Data & Fine-tuning: As organizations fine-tune LLMs, or link them to databases of their proprietary engineering data, agents become domain experts. An agent trained on bridge design schematics and materials science data will outperform a general-purpose AI in that specific context.
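The tool-use point in the list above is easier to picture with a small example. Below is a minimal Python sketch in which the model's output is assumed to be a structured request (a tool name plus arguments) and a dispatcher calls the matching function. The registry, the `dispatch` function and the request shape are hypothetical; the example tool is the textbook mid-span deflection of a simply supported beam under a central point load, standing in for a real simulation engine.

```python
from typing import Callable, Dict

# Hypothetical tool registry: tool name -> plain Python function
TOOLS: Dict[str, Callable[..., float]] = {}


def tool(name: str):
    """Decorator that registers a function under a tool name."""
    def register(fn):
        TOOLS[name] = fn
        return fn
    return register


@tool("beam_deflection")
def beam_deflection(load_n: float, length_m: float, e_pa: float, i_m4: float) -> float:
    # Simply supported beam, central point load: P * L^3 / (48 * E * I), in metres
    return load_n * length_m ** 3 / (48 * e_pa * i_m4)


def dispatch(call: dict) -> float:
    """Run the tool named in a structured request such as an LLM might emit."""
    fn = TOOLS.get(call["tool"])
    if fn is None:
        raise KeyError(f"unknown tool: {call['tool']}")
    return fn(**call["args"])


# Assumed request shape; real agent frameworks each define their own
request = {
    "tool": "beam_deflection",
    "args": {"load_n": 1000.0, "length_m": 2.0, "e_pa": 200e9, "i_m4": 1e-6},
}
deflection_m = dispatch(request)
```

Keeping tools as ordinary functions behind a registry means the same calculation can be tested on its own, independent of whichever model ends up calling it.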

Microservices for Minds: Orchestrating Agent Teams

Perhaps the most transformative aspect of the agentic future is the concept of Multi-Agent Systems (MAS). This is where the analogy to microservices architecture becomes incredibly powerful:

Microservices in Software: Instead of building one giant, monolithic application, developers break it down into small, independent services (microservices) that communicate with each other. Each service does one thing well (e.g., user authentication, payment processing). This makes systems more flexible, scalable, and resilient.

Microservices in AI Agents: Similarly, instead of relying on a single, all-knowing "super-agent," we can deploy teams of specialized agents.

The "Design Agent": Focuses purely on architectural blueprints and material selection.

The "Simulation Agent": Runs structural integrity tests and fluid dynamics models.

The "Safety Agent": Monitors regulations, identifies risks, and proposes mitigation strategies.

The "Procurement Agent": Manages supply chain logistics, pricing, and vendor communication.

The "Quality Control Agent": Analyzes sensor data from the construction site for deviations.

These agents collaborate, delegate tasks, and even challenge each other's assumptions, forming an autonomous, intelligent "project team."
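A minimal Python sketch of this "project team" idea follows. Each agent is a small function that reads a shared state and returns an update, and an orchestrator loops through them until the safety agent approves. The agent functions, the numbers and the capacity rule are invented for illustration and do not represent any real design method.

```python
def design_agent(state: dict) -> dict:
    # Proposes a wall thickness, adding 10 mm after each rejected round
    return {"thickness_mm": state.get("thickness_mm", 90) + 10}


def simulation_agent(state: dict) -> dict:
    # Stand-in for a solver: capacity assumed to be 2 kN per mm of thickness
    capacity_kn = state["thickness_mm"] * 2
    return {"safety_factor": capacity_kn / state["load_kn"]}


def safety_agent(state: dict) -> dict:
    # Challenges the design if the safety factor is below the requirement
    return {"approved": state["safety_factor"] >= state["required_factor"]}


PIPELINE = [
    ("design", design_agent),
    ("simulation", simulation_agent),
    ("safety", safety_agent),
]


def orchestrate(task: dict, max_rounds: int = 5):
    """Run the agents in order, repeating until approval or the round limit."""
    state, log = dict(task), []
    for round_no in range(1, max_rounds + 1):
        for name, agent in PIPELINE:
            update = agent(state)
            state.update(update)
            log.append((round_no, name, update))
        if state["approved"]:
            break
    return state, log


final_state, log = orchestrate({"load_kn": 150, "required_factor": 1.5})
rounds_used = log[-1][0]
```

As with microservices, each agent here can be swapped or tested independently, and the orchestrator is the only piece that knows the order of work. The round limit is where a human-in-the-loop escalation would naturally sit.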

The Road Ahead

The agentic future isn't about replacing engineers; it's about augmenting them with intelligent, collaborative partners through human-in-the-loop architectures. It demands a shift in mindset: from controlling every step of a process to defining goals and orchestrating intelligent systems. As these "microservices for minds" become more sophisticated, the engineering world will unlock new levels of creativity and efficiency, ultimately freeing engineers to focus on new and novel problems.

Agents in Application: Tailings Integrity

Tailings Storage Facilities (TSFs) are some of the most critical assets to manage from a risk perspective. Rather than relying on manual piezometer readings once a month, an agentic microservices approach creates a real-time safety shield.

The Satellite InSAR Agent: This agent continuously ingests Interferometric Synthetic Aperture Radar (InSAR) data to detect millimeter-scale ground displacement. It ignores "noise" from seasonal vegetation changes but alerts the squad the moment it identifies a consistent deformation trend in the dam wall.

The Piezometric Agent: Focused exclusively on the internal "plumbing," this agent monitors pore pressure sensors embedded in the embankment. It correlates pressure spikes with recent rainfall data, determining whether the rise is a normal weather response or a sign of seepage.

The Seismic Agent: This agent monitors local geophones for micro-seismic activity or vibrations from nearby blasting.

The Surveyor Agent: This agent assesses whether recent blasts have caused any measurable displacement, ensuring that operational activity isn't compromising structural integrity.

The Risk Reporter Agent (The Communicator): This agent synthesizes the technical outputs from the others into a daily "Stability Confidence Score" with an accompanying display. If the agents flag anomalies, it doesn't just send an alarm; it generates a report for the Engineering Manager with the exact GPS coordinates and historical data trends attached.
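The sketch below shows, in Python, how three of the agents above might fit together: a trend check for InSAR displacement, a rainfall-adjusted check for pore pressure, and a reporter that turns the flags into a score. All thresholds, the scoring rule, the sample readings and the coordinates are made up for illustration; real limits would come from the facility's geotechnical design basis.

```python
from statistics import mean


def trend_per_step(series):
    """Least-squares slope of a series against its index (needs 2+ points)."""
    xs = range(len(series))
    x_bar, y_bar = mean(xs), mean(series)
    num = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, series))
    den = sum((x - x_bar) ** 2 for x in xs)
    return num / den


def insar_agent(displacement_mm, limit_mm_per_pass=0.3):
    # Flags a consistent deformation trend rather than a single noisy reading
    return trend_per_step(displacement_mm) > limit_mm_per_pass


def piezometric_agent(pressure_kpa, rainfall_mm, kpa_per_mm=0.5, margin_kpa=2.0):
    # Flags a pressure rise larger than the rainfall alone would explain
    rise = pressure_kpa[-1] - pressure_kpa[0]
    expected = kpa_per_mm * sum(rainfall_mm)
    return rise > expected + margin_kpa


def risk_reporter(flags, location, penalty=35):
    # Turns agent flags into a 0-100 score plus a short report
    raised = sorted(name for name, flagged in flags.items() if flagged)
    score = max(0, 100 - penalty * len(raised))
    return {"score": score, "flags": raised, "location": location}


# Invented sample readings: steady creep and a pressure rise with little rain
flags = {
    "insar": insar_agent([0.0, 0.5, 1.0, 1.5, 2.0]),
    "piezometric": piezometric_agent([100, 101, 103, 108], [0, 0, 2, 0]),
}
report = risk_reporter(flags, location=(0.0, 0.0))  # placeholder coordinates
```

Each agent stays small and single-purpose, so a threshold can be retuned or a sensor feed replaced without touching the others. The reporter only needs the flags, not the raw data.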

In Other News

BHP is embedding AI across exploration, minerals processing and logistics, using digital models and advanced analytics to stabilise throughput and improve reliability in large, continuous mining operations (Link)

An Australian government fund is backing a A$75 million high‑purity alumina project in Gladstone, positioning HPA as a “precious mineral” for AI hardware and advanced electronics supply chains (Link)

ISG reports that oil and gas operators are rapidly adopting AI‑enabled asset‑performance platforms, digital twins and predictive maintenance to modernise legacy upstream infrastructure and cut downtime (Link)

Marathon Petroleum is showcasing how Dynamic Risk Analyzer software uses AI for autonomous anomaly detection in refinery environments, catching subtle process deviations before alarms and enabling predictive interventions (Link)

A February 2026 Nature study demonstrates an AI‑enabled digital‑twin and Model Predictive Control framework for smart grids that cuts simulated carbon emissions and operating costs by roughly 30% compared with conventional control strategies (Link)

Industry leaders interviewed in Doha describe how AI‑heavy data centres are emerging as a primary new driver of global electricity demand, underpinning massive LNG and gas‑power investments in an “age of energy addition” (Link)

At CES 2026, AMD unveiled its Helios AI rack and MI500‑series GPUs, claiming up to 1,000× AI‑performance gains over MI300X, while Nvidia’s Rubin platform introduced next‑gen GPUs, CPUs and networking for turnkey “AI factory” supercomputers (Link)

Analysts warn that 2026 is the first year of serious AI enforcement, with the EU AI Act entering full force in August and US states rolling out rules on transparency, bias and AI‑generated content, making governance and compliance non‑negotiable for deployed systems (Link)

This newsletter seeks to engage and challenge the way engineers see AI and its potential for application in industry. Any thoughts, questions or arguments are welcome! Finally, if you enjoy the content, please refer it on to your friends and colleagues, I would love the support.