The Enginuity Digest

Welcome back. This will likely be the last newsletter of the year as I take a short break to enjoy the festive season, so let's get into it. It's been another week of musical chairs at the top of the AI leaderboard. Google released Gemini 3.0, OpenAI countered with GPT-5.2, and Anthropic's Claude is still inching forward on reasoning benchmarks, particularly in coding. The flagship models are locked in a fascinating game of leapfrog where the "best" AI changes every few weeks. To be honest, though, for most engineering work the differences are starting to matter less than the hype suggests.

And the sustainability narrative? It's far more nuanced than many headlines suggest.

Here's what will be covered in today's newsletter:

News Update:

Model Wars Continue: OpenAI releases GPT-5.2 in rushed response to Gemini 3 dominance.

Fusion Gets Real: Tokamak Energy validates complete HTS magnet system, proving commercial fusion hardware works.

Semiconductor Sovereignty: Intel and Tata's $14B India alliance reshapes global AI infrastructure.

Deep Dive: Sustainability - Training vs Usage

In Other News: Carbon capture milestones, grid transformation, and manufacturing breakthroughs

What’s been happening with AI?

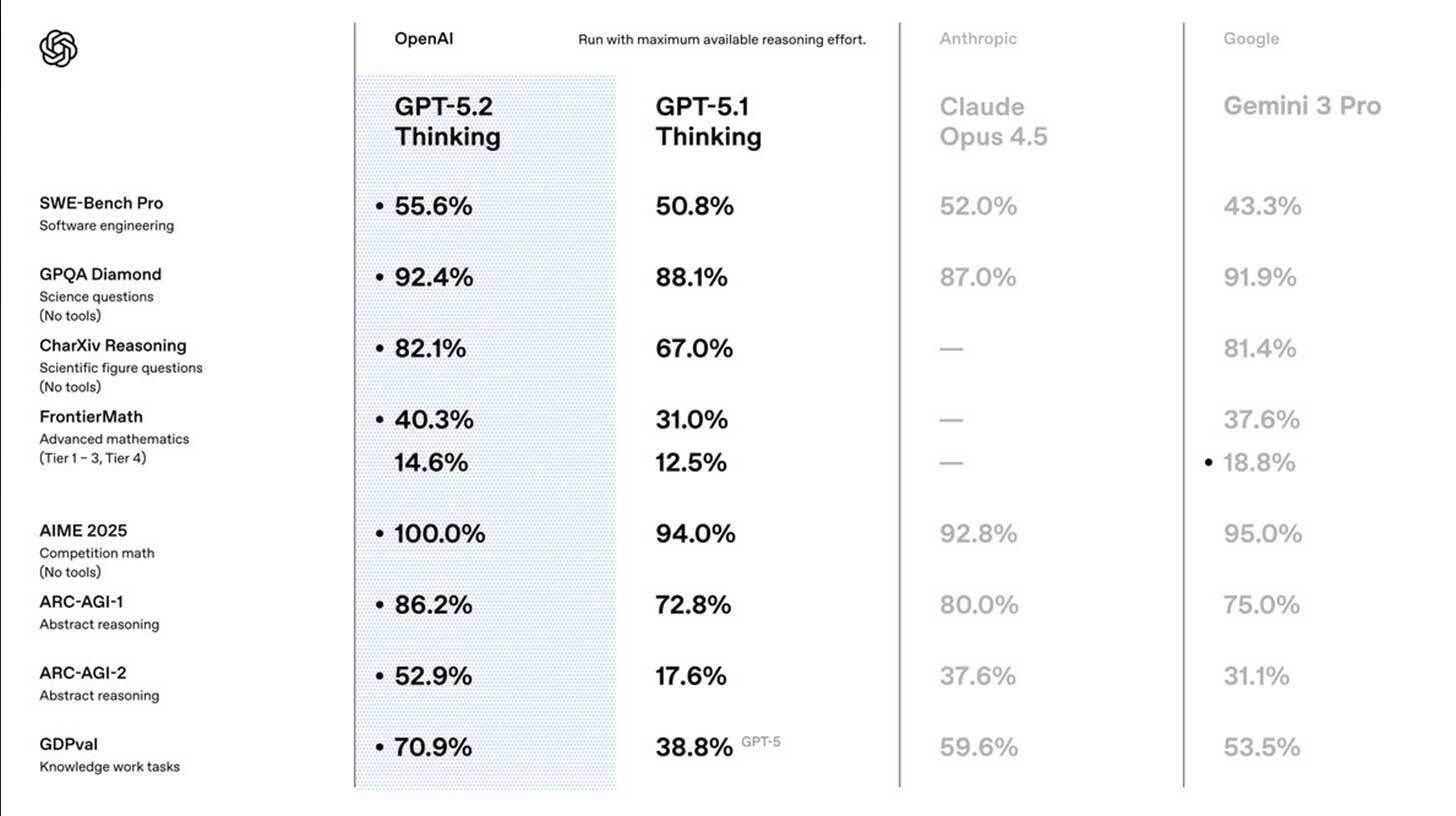

OpenAI Releases GPT-5.2 Model Family in Counter to Gemini 3 Dominance (Link)

OpenAI released its GPT-5.2 model family as the company's "most capable series yet for professional knowledge work," arriving weeks after internal memos warned the company was losing ground to Google's Gemini 3.

The release includes three tiers: Instant for quick queries, Thinking for complex reasoning, and Pro for maximum accuracy on hard problems.

GPT-5.2 delivers improvements across key benchmarks including reduced hallucinations, enhanced vision and coding, better long-context reasoning, and improved tool use.

On GDPval, which tests real-world professional tasks, GPT-5.2 Thinking beat or matched industry professionals 71% of the time across spreadsheets, presentations, and document analysis, making it once again the best available model for real-world scenarios.

Why this matters: The 71% success rate against professionals marks a genuine capability threshold for engineering workflows: complex calculations, technical synthesis, and multi-step reasoning now deliver reliable results without constant human verification. OpenAI is clearly on the defensive for the first time, but this new release proves they can still compete.

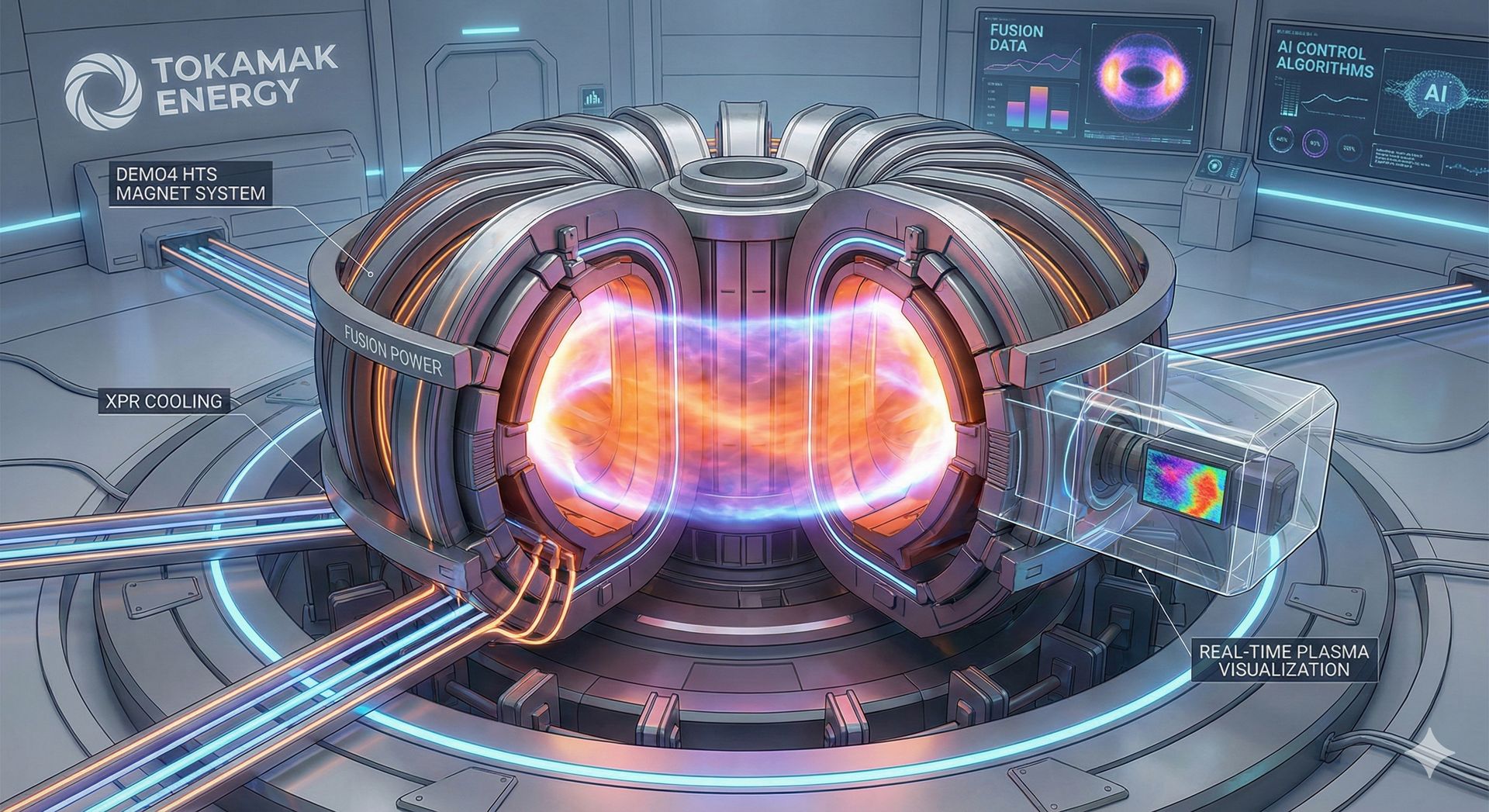

Tokamak Energy Achieves World-First Complete High-Temperature Superconductor Validation (Link)

UK-based Tokamak Energy successfully reproduced fusion power plant magnetic fields within its complete Demo4 high-temperature superconducting (HTS) magnet system on November 22, 2025—the first instance globally of such fields being validated in a complete setup.

This development represents over ten years of HTS advancement at the company and validates a core technical solution for delivering clean and reliable fusion energy to the grid.

Real-time visualization of lithium-plasma interactions represents a crucial validation step toward enabling this critical technology for commercial reactor longevity.

Why this matters: Fusion has been "20 years away" for a long time, but this breakthrough represents a complete hardware validation, not simulation or theory. For engineers grappling with AI's energy crisis, fusion represents the only energy source that could realistically match exponential compute growth without carbon emissions. The fact that plasma control now relies on real-time AI managing magnetic coils in milliseconds demonstrates the symbiotic relationship between AI development and fusion commercialization.

Intel and Tata Group Form $14 Billion Strategic Alliance for India's Semiconductor Ecosystem (Link)

Intel Corporation and the Tata Group announced a landmark collaboration, with Tata Electronics investing $14 billion to establish India's first semiconductor fabrication facility in Dholera, Gujarat, alongside an Outsourced Semiconductor Assembly and Test (OSAT) facility in Assam.

The Dholera facility is scheduled for operations by mid-2027, while the Assam OSAT facility could achieve operational status as early as April 2026.

Intel's CEO emphasized this alliance as critical to the company's AI strategy, with the manufacturing facilities themselves incorporating advanced automation powered by AI and machine learning to optimize efficiency.

Why this matters: This isn't just geopolitical hedging. It provides what's needed for top AI deployment, industrial IoT sensors, automotive autonomy, and all the distributed compute that makes AI useful outside hyperscale data centers. India's move also demonstrates the recursive nature of AI development: the facilities will use AI to manufacture AI chips more efficiently. It's turtles all the way down, and it's happening faster than anyone expected.

AI's Energy Paradox: Why Training Burns the Grid but Inference Doesn't

If you've been following the AI sustainability debate, you've probably heard the apocalyptic projections: AI will consume 10-20% of global electricity by 2030, ChatGPT queries are boiling the oceans, and we're building a technology that will cook the planet before it saves it.

The Training Problem: Real, Massive, but Concentrated

Let's not sugarcoat this: training large AI models requires an insane amount of energy. Training GPT-4 consumed an estimated 50 gigawatt-hours (GWh) of electricity, enough to power roughly 5,000 Australian homes for an entire year. Google's Gemini 3.0? Likely around double that. These training runs require thousands of GPUs running at full throttle for weeks, generating so much heat that cooling systems consume 40% of the data center's total power draw.

The math compounds when you consider frequency. OpenAI, Google, Anthropic, and Meta are each training multiple frontier models per year, with each generation requiring exponentially more compute. Throw in the thousands of smaller companies fine-tuning specialized models, and you're looking at a genuine infrastructure crisis.

Here's where it gets really problematic: most training happens in regions with dirty grids. Northern Virginia, the world's largest data center hub (consuming 25% of the state's electricity), sources much of its power from natural gas. Hyperscalers are signing deals to restart retired coal plants and extend the life of aging nuclear reactors just to keep the silicon fed.

The cooling challenge is equally brutal. A single NVIDIA H100 GPU rack generates 10.2 kW of thermal load. Pack 50 racks into a training cluster, and you're dealing with half a megawatt of heat in a space smaller than a tennis court. Traditional air cooling can't handle it, so liquid cooling is becoming mandatory, but it comes with water consumption issues: 1 to 5 liters of water per kilowatt-hour in evaporative systems. In water-stressed regions like Arizona or Australia's proposed inland data centers, this is highly significant.
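As a rough check on the cooling figures above, the short Python sketch below multiplies out the rack count and per-rack thermal load, then applies the quoted range of 1 to 5 liters per kilowatt-hour. It assumes the cluster runs at full load around the clock and that the water figure applies to every kilowatt-hour of heat rejected. Both are simplifications for illustration, and the function names are my own.

```python
# Figures quoted in the paragraph above.
RACK_HEAT_KW = 10.2            # thermal load per H100 rack
RACKS = 50                     # racks in the example training cluster
WATER_L_PER_KWH = (1.0, 5.0)   # evaporative cooling range, liters per kWh


def cluster_heat_kw(racks: int = RACKS, per_rack_kw: float = RACK_HEAT_KW) -> float:
    """Total heat the cooling system has to reject, in kW."""
    return racks * per_rack_kw


def daily_water_liters(heat_kw: float, liters_per_kwh: float, hours: float = 24.0) -> float:
    """Evaporative water use over `hours`, assuming constant full load (assumption)."""
    return heat_kw * hours * liters_per_kwh


if __name__ == "__main__":
    heat = cluster_heat_kw()
    low, high = (daily_water_liters(heat, rate) for rate in WATER_L_PER_KWH)
    print(f"Cluster heat load: {heat:.0f} kW")
    print(f"Water per day: {low:,.0f} to {high:,.0f} liters")
```

On these numbers the cluster rejects about 510 kW and, under the stated assumptions, would evaporate somewhere between roughly 12,000 and 61,000 liters of water a day.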

The Inference Surprise: Not What You've Been Told

Here's the counterintuitive part that the headlines miss: actually using AI, what's called inference, is nowhere near as bad as the training story suggests.

A groundbreaking study from Google and UC Berkeley revealed that a single ChatGPT query consumes approximately 0.3 watt-hours (Wh) of electricity. That's roughly what a 10 W LED bulb draws in just under two minutes. For perspective:

A Google search: 0.3 Wh

One minute of Netflix streaming: 0.8 Wh

A single ChatGPT response: 0.3 Wh

Charging your phone: 5-10 Wh

The study found that inference accounts for only 10-20% of a model's lifetime energy consumption, with training dominating the carbon footprint. Once a model is deployed, serving billions of queries is remarkably efficient because inference doesn't require the massive parallelization that training demands. You're running calculations through a frozen network, not updating billions of parameters simultaneously.
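To put the per-query figures listed above on a common footing, this small Python sketch expresses each item as a multiple of one ChatGPT response. The numbers are the ones quoted in this newsletter; the dictionary labels and the function name are illustrative only.

```python
# Energy per activity in watt-hours, as quoted in the comparison list above.
ENERGY_WH = {
    "Google search": 0.3,
    "One minute of Netflix streaming": 0.8,
    "Phone charge (low estimate)": 5.0,
    "Phone charge (high estimate)": 10.0,
}
CHATGPT_RESPONSE_WH = 0.3


def in_chatgpt_responses(energy_wh: float) -> float:
    """Express an energy amount as an equivalent number of ChatGPT responses."""
    return energy_wh / CHATGPT_RESPONSE_WH


if __name__ == "__main__":
    for activity, wh in ENERGY_WH.items():
        print(f"{activity}: {in_chatgpt_responses(wh):.1f} responses")
```

On these figures, a single phone charge is worth somewhere between about 17 and 33 responses.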

The hardware evolution is accelerating this gap. NVIDIA's latest Blackwell architecture delivers 2.5× better inference performance per watt compared to Hopper. Google's TPU v5e achieves even better efficiency for specific workloads. More radically, startups like Extropic are developing thermodynamic computing architectures that claim 10,000× efficiency improvements by computing with probability distributions rather than deterministic logic (though real-world validation is pending).

Where the Real Opportunity Lies

1. Train Smart, Not Often

Techniques like Low-Rank Adaptation (LoRA), quantization, and model pruning can reduce training energy by 90% compared with training from scratch while maintaining performance. For engineering firms deploying specialized models (predictive maintenance, process optimization, compliance checking), you should never be training from scratch. Fine-tuning existing models or augmenting them with external knowledge bases is both faster and vastly more efficient.
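To see why LoRA in particular is cheap to train, the Python sketch below compares the trainable parameter count of one weight matrix under full fine-tuning with the count for a low-rank update, where a d_out × d_in matrix is adapted through two small matrices of rank r. The 4096 × 4096 layer and the rank of 8 are hypothetical values chosen for illustration. Fewer trainable parameters does not translate one-to-one into energy saved, since the forward pass still runs through the full frozen model.

```python
def full_finetune_params(d_out: int, d_in: int) -> int:
    """Parameters updated when the whole weight matrix is fine-tuned."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """LoRA trains B (d_out x rank) and A (rank x d_in); the base weights stay frozen."""
    return rank * (d_out + d_in)


def trainable_fraction(d_out: int, d_in: int, rank: int) -> float:
    """Share of the layer's parameters that LoRA actually updates."""
    return lora_params(d_out, d_in, rank) / full_finetune_params(d_out, d_in)


if __name__ == "__main__":
    # Hypothetical layer size and rank, for illustration only.
    d_out, d_in, rank = 4096, 4096, 8
    print(f"Full fine-tune: {full_finetune_params(d_out, d_in):,} parameters")
    print(f"LoRA (r={rank}): {lora_params(d_out, d_in, rank):,} parameters")
    print(f"Trainable share: {trainable_fraction(d_out, d_in, rank):.2%}")
```

For this hypothetical layer, LoRA updates 65,536 parameters instead of 16,777,216, or about 0.39% of the total.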

2. Engineer the Cooling

This is where mechanical and process engineers have genuine opportunities. Waste heat recovery from data centers (40-80°C) is perfect for district heating, industrial preheating, or absorption cooling. The challenge is co-locating compute with thermal loads, which requires infrastructure planning, not just IT procurement.

3. Measure What Matters

The AI-is-killing-the-planet narrative conflates training (energy-intensive, infrequent) with inference (efficient, continuous). A more useful metric is energy payback: does the AI system optimize operations enough to offset its training and operational footprint?

The Aramco-Yokogawa deployment at Fadhili demonstrates this perfectly. The autonomous control agents were trained once using simulation and historical data, then deployed to run continuously on existing control hardware. The 5% power reduction delivered across the entire gas plant dwarfs the one-time training cost by orders of magnitude. That's the sustainability win: using AI to optimize industrial processes at scale delivers net energy reductions that far exceed inference costs.
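The energy payback idea can be written as a simple calculation: divide the one-time training energy by the net power saved once the model is running. The Python sketch below does this. Only the 5% saving comes from the Fadhili example; the training energy, plant load, and inference load are hypothetical placeholders, not reported figures.

```python
def payback_days(
    training_mwh: float,
    inference_kw: float,
    baseline_kw: float,
    saving_fraction: float,
) -> float:
    """Days until cumulative energy savings offset the one-time training energy.

    Returns infinity if the AI system draws at least as much power as it saves.
    """
    net_saving_kw = baseline_kw * saving_fraction - inference_kw
    if net_saving_kw <= 0:
        return float("inf")
    hours = training_mwh * 1000.0 / net_saving_kw  # MWh -> kWh, then kWh / kW = hours
    return hours / 24.0


if __name__ == "__main__":
    days = payback_days(
        training_mwh=100.0,     # hypothetical one-time training energy
        inference_kw=20.0,      # hypothetical continuous inference load
        baseline_kw=50_000.0,   # hypothetical plant load before optimization
        saving_fraction=0.05,   # the 5% reduction cited in the text
    )
    print(f"Energy payback: {days:.1f} days")
```

With these placeholder inputs the payback comes in under two days. The point of the exercise is to plug in measured numbers for your own plant rather than to trust the placeholders.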

The AI energy narrative needs a reset. It is critical to understand the real sustainability issues that exist with AI and to apply the same rigorous, physics-based engineering principles to its deployment as to any other industrial system.

In Other News

Mitsubishi Heavy Industries (MHI) and Worley announced the execution phase of the Padeswood Carbon Capture project in North Wales, the first full-scale commercial CCS project in UK cement, targeting 800,000 tonnes CO₂ annually by 2029 using MHI's Advanced KM CDR Process (Link)

India's Department of Science and Technology launched its first R&D Roadmap for CCUS on December 2, coordinating with the ₹1 Lakh Crore RDI Scheme to accelerate industrial decarbonization with three new National Centers of Excellence (Link)

GE Vernova secured 84 turbines (6.1 MW each) for two Romanian wind farms totaling ~500 MW, supplying electricity for 110,000 households with deliveries starting 2026 and completion by 2027 (Link)

BloombergNEF reports global grid investment exceeding $470 billion in 2025 for the first time, driven by renewable integration, electrification, and AI infrastructure demands—though IEA estimates investment must double by 2030 (Link)

This newsletter seeks to engage and challenge the way engineers see AI and its potential for application in industry. Any thoughts, questions or arguments are welcome! Finally, if you enjoy the content, please pass it on to your friends and colleagues. I would love the support.